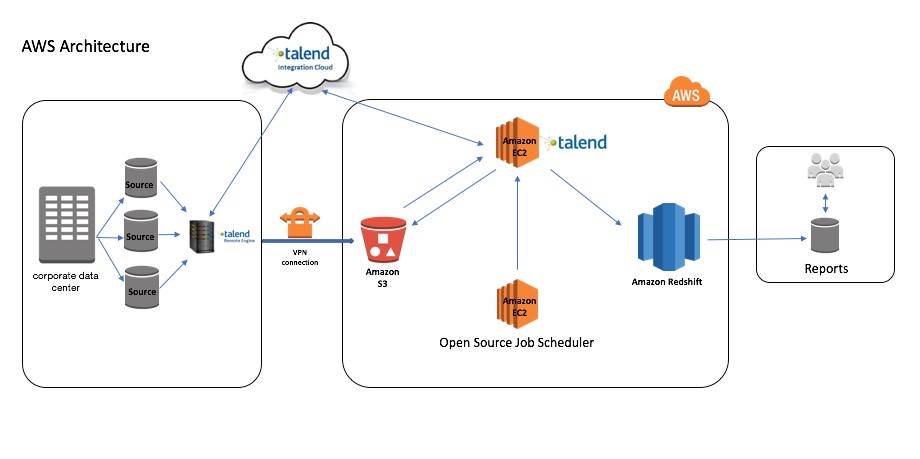

- Use workload management to improve ETL runtimes.
- Perform multiple steps in a single transaction.
- Use UNLOAD to extract large result sets.
- Use Amazon Redshift Spectrum for ad hoc ETL processing.
- Monitor daily ETL health using diagnostic queries.

COPY data from multiple, evenly sized files

Amazon Redshift is an MPP (massively parallel processing) database, where all the compute nodes divide and parallelize the work of ingesting data. Each node is further subdivided into slices, with each slice having one or more dedicated cores, equally dividing the processing capacity. The number of slices per node depends on the node type of the cluster. For example, each DS2.XLARGE compute node has two slices, whereas each DS2.8XLARGE compute node has 16 slices.

When you load data into Amazon Redshift, you should aim to have each slice do an equal amount of work. When you load the data from a single large file or from files split into uneven sizes, some slices do more work than others. As a result, the process runs only as fast as the slowest, or most heavily loaded, slice. In the example shown in the figure above, a single large file is loaded into a two-node cluster, resulting in only one of the nodes, "Compute-0", performing all the data ingestion.

When splitting your data files, ensure that they are of approximately equal size, between 1 MB and 1 GB after compression. The number of files should be a multiple of the number of slices in your cluster.
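The file-splitting guidance can be sketched in a few lines of Python. This is a minimal illustration, not an official tool: the helper names (`total_slices`, `choose_file_count`, `split_evenly`) are hypothetical, the slice counts cover only the two node types mentioned in the text, and the size passed in is assumed to be an estimate of the compressed data size.

```python
import math

# Slices per node, taken from the two node types named in the text.
# Other node types are not covered here and would need to be added.
SLICES_PER_NODE = {"ds2.xlarge": 2, "ds2.8xlarge": 16}

ONE_GB = 1024 ** 3  # upper bound per file (after compression) from the text


def total_slices(node_type, node_count):
    """Return the number of slices in a cluster of identical nodes."""
    return SLICES_PER_NODE[node_type.lower()] * node_count


def choose_file_count(total_bytes, slices, max_file_bytes=ONE_GB):
    """Pick the smallest multiple of `slices` that keeps every file
    at or below `max_file_bytes`. The 1 MB lower bound is not enforced,
    so very small inputs still yield one file per slice."""
    files_needed = max(1, math.ceil(total_bytes / max_file_bytes))
    return slices * math.ceil(files_needed / slices)


def split_evenly(rows, file_count):
    """Distribute rows round-robin so each part gets a near-equal share.
    This assumes rows are of broadly similar size."""
    return [rows[i::file_count] for i in range(file_count)]


slices = total_slices("ds2.xlarge", 2)             # two-node cluster
file_count = choose_file_count(10 * ONE_GB, slices)
parts = split_evenly(list(range(10)), slices)
print(slices, file_count, [len(p) for p in parts])
```

Each part would then be compressed and uploaded under a common key prefix, so that a single COPY command can pick up all the files and spread them across the slices.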
